G of Alpha

Advancing the frontier of self-regulating intelligence — from adaptive neural network architectures to real-time control systems spanning AI, automation, and physical processes.

64

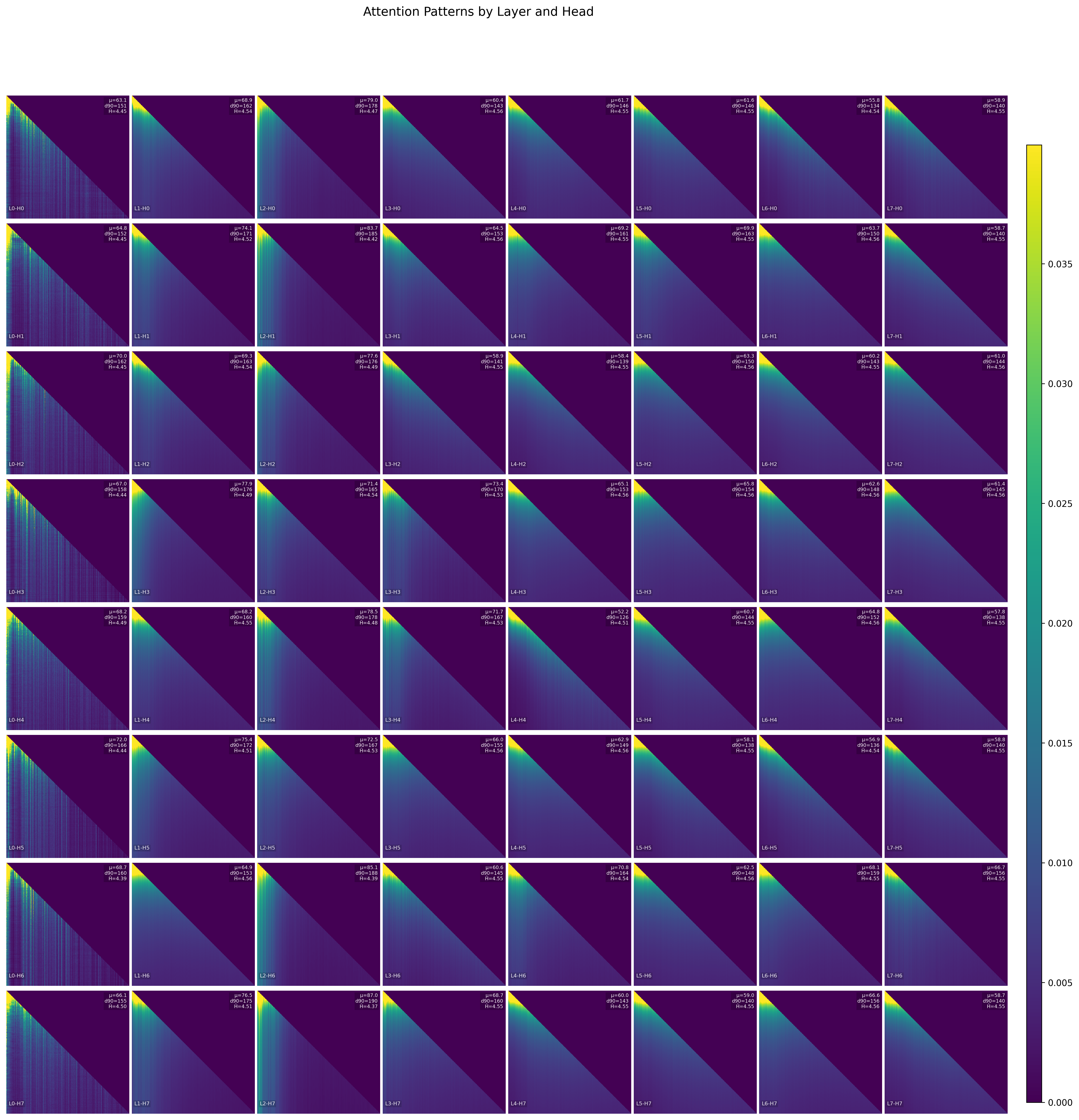

Attention heads — zero collapsed

20K

Steps — zero divergence events

807 MB

Constant memory — no leaks

3

Autonomous regime transitions

Self-Regulating Training Dynamics

We've developed a novel framework that transforms standard neural networks from passive, open-loop systems into active, self-regulating ones. Our models don't just train — they monitor and correct their own internal dynamics in real time.

Standard neural network training is brittle — loss spikes, attention collapse, head redundancy, and hyperparameter sensitivity plague models at many scales. Our approach addresses these problems at their root, producing models that are more robust, more efficient, and require less manual intervention to train successfully.
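One common way to make "attention collapse" concrete is to measure the entropy of each head's attention distribution and flag heads whose average entropy sits near zero, meaning nearly all weight lands on a single token. The sketch below assumes that definition and an arbitrary threshold (`COLLAPSE_THRESHOLD`, hypothetical). It illustrates the kind of internal signal a self-monitoring model could track, not necessarily the metric this framework uses.

```python
import math
from typing import List

# Hypothetical threshold (nats): heads with mean attention entropy below this
# value are treated as "collapsed". Chosen for illustration only.
COLLAPSE_THRESHOLD = 0.1


def row_entropy(probs: List[float]) -> float:
    """Shannon entropy (nats) of one attention row; zero-probability terms contribute 0."""
    return -sum(p * math.log(p) for p in probs if p > 0.0)


def collapsed_heads(attn: List[List[List[float]]], threshold: float = COLLAPSE_THRESHOLD) -> List[int]:
    """Return indices of heads whose mean row entropy falls below `threshold`.

    attn[h][q] is head h's attention distribution for query position q.
    """
    flagged = []
    for h, rows in enumerate(attn):
        mean_entropy = sum(row_entropy(r) for r in rows) / len(rows)
        if mean_entropy < threshold:
            flagged.append(h)
    return flagged


# Two toy heads over 4 key positions: head 0 spreads attention evenly,
# head 1 puts all of its weight on the first token.
uniform = [[0.25, 0.25, 0.25, 0.25]] * 3
one_hot = [[1.0, 0.0, 0.0, 0.0]] * 3
print(collapsed_heads([uniform, one_hot]))  # [1]
```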

Demonstrated strategic capabilities

51.5M parameters, no chess-specific architecture

All 64 attention heads maintain healthy, non-degenerate distributions, with zero collapsed heads.

Flagship Demonstration

As a rigorous test of our framework's ability to learn complex sequential structure, we trained a 51.5M-parameter chess model that, over the course of training, progresses from garbled output to strategically coherent games. It learns purely from next-token prediction on PGN (Portable Game Notation) text, with no chess-specific architecture, move encoding, or board representation.
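Next-token prediction on PGN text means the model only ever sees raw game records and learns to predict each following token. The tokenizer is not described here, so the minimal sketch below assumes character-level tokenization to show how one game's movetext becomes input/target pairs. The helper names are hypothetical.

```python
from typing import Dict, List, Tuple


def strip_pgn_headers(pgn: str) -> str:
    """Drop PGN tag-pair lines such as [Event "..."] and keep only the movetext."""
    lines = [ln.strip() for ln in pgn.splitlines()]
    return " ".join(ln for ln in lines if ln and not ln.startswith("["))


def next_token_pairs(text: str, vocab: Dict[str, int]) -> Tuple[List[int], List[int]]:
    """Character-level encoding (an assumption); targets are the inputs shifted by one."""
    ids = [vocab[ch] for ch in text]
    return ids[:-1], ids[1:]


pgn = '[Event "Example"]\n[Result "1-0"]\n\n1. e4 e5 2. Nf3 Nc6 1-0'
movetext = strip_pgn_headers(pgn)
vocab = {ch: i for i, ch in enumerate(sorted(set(movetext)))}
inputs, targets = next_token_pairs(movetext, vocab)
print(movetext)  # 1. e4 e5 2. Nf3 Nc6 1-0
```

Nothing in this pipeline encodes board state or piece rules; any knowledge of legal moves has to be inferred from the text itself.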

Chess is one of the most demanding tests of sequential reasoning. Every move must satisfy geometric constraints (piece movement rules), positional constraints (board state across full game history), and strategic constraints (coherent game plans). A model that masters this demonstrates genuine understanding of deep sequential dependencies — not just pattern matching.

The model is publicly available on Hugging Face. Try it yourself.

Developed Research

Role: information tracking and flow control — determines when and how to continue, pause, or modulate.

The Alpha-ML Controller serves as the information flow controller and signal head coordinator. It evaluates each step of the generation process as it happens, using uncertainty metrics and signal activations to determine when and how the system should continue, pause, or modulate its output.

It’s more than a prompt engine: it’s an environment-aware controller that shapes system behavior dynamically. It regulates pacing and confidence and decides when to pause, reflect, re-engage, or actuate, giving every output or action deliberate timing and control.

Alpha-ML’s controller introduces a layer of live intelligence to any system. It doesn’t just generate — it manages process and intent.
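The controller's internals are not published. As an illustration only, one simple way to turn an uncertainty metric into continue/pause/modulate decisions is to threshold the normalized entropy of the next-token distribution at each generation step. The thresholds (`MODULATE_AT`, `PAUSE_AT`) and the three-way rule below are hypothetical, not the Alpha-ML Controller's method.

```python
import math
from enum import Enum
from typing import Sequence


class Action(Enum):
    CONTINUE = "continue"
    MODULATE = "modulate"  # e.g. lower the sampling temperature for this step
    PAUSE = "pause"        # e.g. stop to reflect or request more context


# Hypothetical thresholds on normalized entropy (0 = certain, 1 = uniform).
MODULATE_AT = 0.5
PAUSE_AT = 0.8


def normalized_entropy(probs: Sequence[float]) -> float:
    """Shannon entropy of a next-token distribution divided by log(V), in [0, 1]."""
    if len(probs) < 2:
        return 0.0  # a single-token vocabulary carries no uncertainty
    h = -sum(p * math.log(p) for p in probs if p > 0.0)
    return h / math.log(len(probs))


def decide(probs: Sequence[float]) -> Action:
    """Map per-step uncertainty to one of three hypothetical control actions."""
    u = normalized_entropy(probs)
    if u >= PAUSE_AT:
        return Action.PAUSE
    if u >= MODULATE_AT:
        return Action.MODULATE
    return Action.CONTINUE


print(decide([0.97, 0.01, 0.01, 0.01]))  # Action.CONTINUE
print(decide([0.25, 0.25, 0.25, 0.25]))  # Action.PAUSE
```

Normalizing by log(V) keeps the thresholds meaningful regardless of vocabulary size.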

Advanced Research

Alpha-Adjust is a lightweight, high-precision control engine that enables systems to self-regulate in response to changing conditions. It provides dynamic output adjustment based on signal deviation — helping intelligent systems remain stable, adaptive, and aligned without the need for manual intervention or retraining.

Built for seamless integration, Alpha-Adjust allows you to enforce operational control through configurable behavioral profiles — such as focused, balanced, or stable — letting you tune the system’s reactivity to match its context. No model modification required.

Alpha-Adjust lets systems respond to conditions as they change, modulating their behavior on the fly to maintain control under pressure.
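Alpha-Adjust's algorithm is not described here. To make "output adjustment based on signal deviation" with focused, balanced, or stable profiles concrete, the sketch below swaps in a plain proportional correction whose gain depends on the chosen profile. The gain values, the `max_step` clamp, and the `Adjuster` class are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical reactivity per profile: a higher gain corrects deviation faster.
PROFILE_GAINS = {"focused": 0.8, "balanced": 0.5, "stable": 0.2}


@dataclass
class Adjuster:
    profile: str = "balanced"
    max_step: float = 1.0  # hypothetical cap on any single correction

    def adjust(self, output: float, target: float) -> float:
        """Move `output` toward `target` by a profile-dependent fraction of the deviation."""
        gain = PROFILE_GAINS[self.profile]
        deviation = target - output
        step = max(-self.max_step, min(self.max_step, gain * deviation))
        return output + step


stable = Adjuster("stable")
focused = Adjuster("focused")
print(stable.adjust(0.0, 1.0), focused.adjust(0.0, 1.0))  # 0.2 0.8
```

Here the profile changes only how strongly the system reacts, not the model producing the output, which mirrors the "no model modification required" point above.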

Commercial license required for redistribution or embedded integration.

Explore two complementary controllers available via RapidAPI. Both expose public-facing response fields for integration and observability — processing_factor, control_parameter, output_gain, and processed_output.

A high-performance FastAPI-based adaptive control system for signal processing and control optimization. Provides advanced mathematical algorithms for real-time control parameter calculation and signal amplification.

Public fields: processing_factor, control_parameter, output_gain, processed_output

Primary endpoints: POST /calculate, POST /calculate/batch, GET /health
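The request schema for POST /calculate is not given on this page. The sketch below shows only the general shape of a call through RapidAPI, which conventionally authenticates with the `X-RapidAPI-Key` and `X-RapidAPI-Host` headers, and how a client might pull out the four documented public fields. The host name is a placeholder and the request body is passed through unchanged; take both from the API's RapidAPI listing.

```python
import json
import urllib.request
from typing import Any, Dict

# Placeholder: use the real host from the API's RapidAPI listing.
RAPIDAPI_HOST = "example-controller.p.rapidapi.com"  # hypothetical
PUBLIC_FIELDS = ("processing_factor", "control_parameter", "output_gain", "processed_output")


def build_calculate_request(body: Dict[str, Any], api_key: str, batch: bool = False) -> urllib.request.Request:
    """Build (but do not send) a POST to /calculate or /calculate/batch.

    `body` must follow the schema in the API's own documentation.
    """
    path = "/calculate/batch" if batch else "/calculate"
    return urllib.request.Request(
        url=f"https://{RAPIDAPI_HOST}{path}",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "X-RapidAPI-Key": api_key,
            "X-RapidAPI-Host": RAPIDAPI_HOST,
        },
        method="POST",
    )


def extract_public_fields(response_json: Dict[str, Any]) -> Dict[str, Any]:
    """Keep only the documented public fields; raise KeyError if any is missing."""
    missing = [f for f in PUBLIC_FIELDS if f not in response_json]
    if missing:
        raise KeyError(f"response lacks fields: {missing}")
    return {f: response_json[f] for f in PUBLIC_FIELDS}
```

To send it, pass the request to `urllib.request.urlopen` and feed `json.load(response)` to `extract_public_fields`.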

Applies an adaptive scalar gain to a vector of outputs based on provided inputs and parameters. For multiple signals or channels, make one call per signal/channel.

The base API is stateless: it keeps nothing between calls, so your client must store previous_h and supply it with each request.

Primary endpoints: POST /calculate, GET /health
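Because the base API is stateless, the client has to carry previous_h from one call to the next. The loop below sketches that bookkeeping for a single channel. The page does not say where the next previous_h comes from in a response, so the caller supplies both the call (`call_api`) and the state extractor (`state_from`). `fake_calculate` is a hypothetical offline stand-in with an invented smoothing rule, used only so the state handling can run without network access.

```python
from typing import Any, Callable, Dict, List, Optional

Response = Dict[str, Any]


def run_channel(
    samples: List[List[float]],
    call_api: Callable[[List[float], Optional[float]], Response],
    state_from: Callable[[Response], float],
    initial_h: Optional[float] = None,
) -> List[Response]:
    """Send one request per sample, threading previous_h through successive calls."""
    previous_h = initial_h
    responses = []
    for outputs in samples:
        resp = call_api(outputs, previous_h)  # stands in for POST /calculate
        responses.append(resp)
        previous_h = state_from(resp)         # client-side state, kept between calls
    return responses


def fake_calculate(outputs: List[float], previous_h: Optional[float]) -> Response:
    """Hypothetical stand-in: h is a running average of mean |output|, gain = 1 / (1 + h)."""
    level = sum(abs(x) for x in outputs) / len(outputs)
    h = level if previous_h is None else 0.5 * previous_h + 0.5 * level
    gain = 1.0 / (1.0 + h)
    return {"h": h, "output_gain": gain, "processed_output": [gain * x for x in outputs]}


out = run_channel([[2.0, 2.0], [0.0, 0.0]], fake_calculate, lambda r: r["h"])
print([r["h"] for r in out])  # [2.0, 1.0]
```

For several channels, run one such loop per channel, each with its own previous_h.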